In one corner of my examination room, there is a small cactus.

It sits quietly in a spot that patients pass by absentmindedly while waiting for their appointments. It has no name and does not stand out much.

But to me, this cactus brings YouTube to mind.

I do not actually run a YouTube channel, but YouTube's presence in my life feels oddly similar to that cactus: something that grows on its own and, before long, has become part of the exam room.

When I have a brief break after seeing patients, my finger somehow ends up tapping the YouTube icon. As I follow one recommended video after another without thinking, I realize that I have wandered into the "garden of the algorithm."

Not long ago, the YouTube algorithm must have categorized me as an "obsessive dog-video watcher," because I fell into a pit of dog content.

At first, I had only watched a few videos casually, but at some point my YouTube home screen was filled entirely with dog videos, and other content gradually disappeared.

In the end, it was only when my wife asked, "Do you like dogs that much?" that I realized how much I had become someone else inside YouTube.

In real life, I am not like that, but within the algorithm, I had already become a "dog fanatic."

Since I was young, I have always been the type of person who, once I start digging into a topic, goes all the way to the end. In elementary school, I was a dinosaur expert; in middle school, I collected comic books; and in college, I became fascinated by hair and eventually chose the path of a doctor.

And now, I live as a doctor treating hair loss.

This tendency helps when deeply exploring a specialty, but I often feel that in the world of algorithms, it can actually become a disadvantage.

Even a little interest is enough for the algorithm to immediately push me into a specific category and prevent me from ever escaping it.

In the past, we read the world through newspapers.

News provided by major media companies such as JoongAng, DongA, and Chosun was treated almost like truth.

Later, portals like Naver and Daum took over that role.

News appearing at the top of search results was regarded as truth, and click counts became indicators of interest and trust.

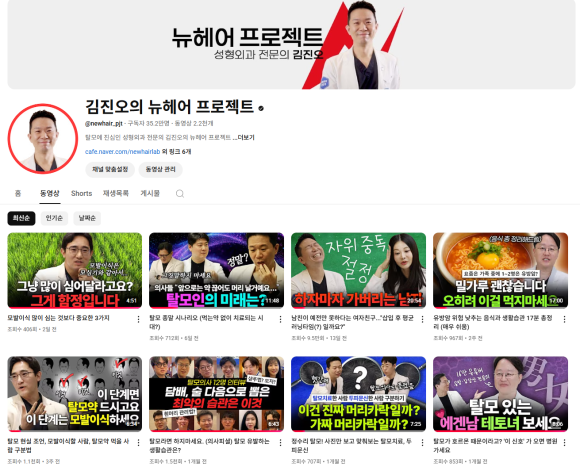

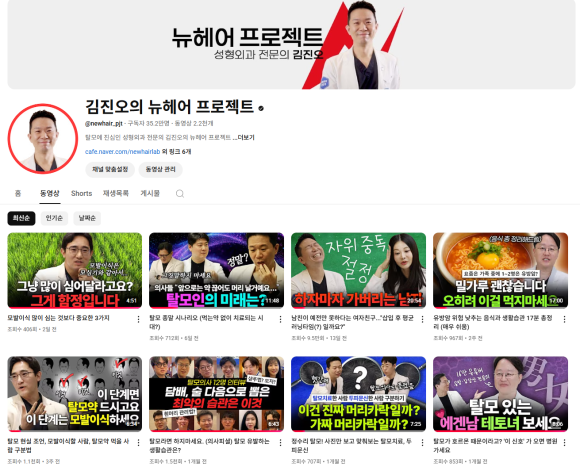

And now, YouTube has taken that place.

The problem is that personalized recommendation systems are increasingly driving people into narrower islands of information.

Not long ago, while talking with a friend about politics, I was surprised by how different our views were.

My friend asked in genuine confusion, "What do you mean? Haven't you heard about this? It's all there if you just go on YouTube," but I had never once come across those videos or opinions.

We were living in completely different algorithmic universes.

Fortunately, we were able to respect each other's thoughts, so there was no major problem. But if that had not been the case, political differences might have led directly to personal conflict.

This phenomenon will only become more severe in the future.

Artificial intelligence systems such as ChatGPT are already providing highly personalized search results and customized content, and their accuracy and convenience continue to improve.

But behind that convenience lies the risk that our thinking becomes increasingly narrow and that we fall into confirmation bias without even realizing it.

I felt this problem once again during a recent consultation with a patient.

That person had watched countless videos about hair loss on YouTube, but instead of gaining clarity had been swayed by false information and left confused.

Most of the content the YouTube algorithm had provided consisted of extreme solutions or baseless claims, and in the end I had to go through each misunderstanding one by one in the exam room.

After the consultation ended, the person left looking relieved.

The experience reminded me once again of something.

What algorithms give us is not "truth," but only the kind of story we are likely to enjoy.

The world is far more complex and layered.

YouTube's algorithm may satisfy our interests, but it does not make us better people.

So I consciously try to look for opinions completely different from my own.

It can feel unfamiliar and uncomfortable, but through that process I learn humility, realizing that my own thoughts are not always correct.

In the end, there is only one thing we need to do.

Do not remain only within the information provided by the algorithm; instead, seek out different perspectives and opinions on your own and keep your ears open.

And always remember that there can be worlds that are different from your own.

Harmony without sameness.

The phrase means achieving harmony while remaining different: an attitude of respecting other opinions while coexisting peacefully.

I think this may be one of the values most needed in our time.